I finally got a right-sized flavor for an OpenShift deployment: 25 GB disk, 4 vCPUs, 16 GB RAM. With that, I tore down the old cluster and tried to redeploy. Right now, the deploy is failing at the stage where the controller nodes query the API port. What is going on?

Here is the reported error on the console:

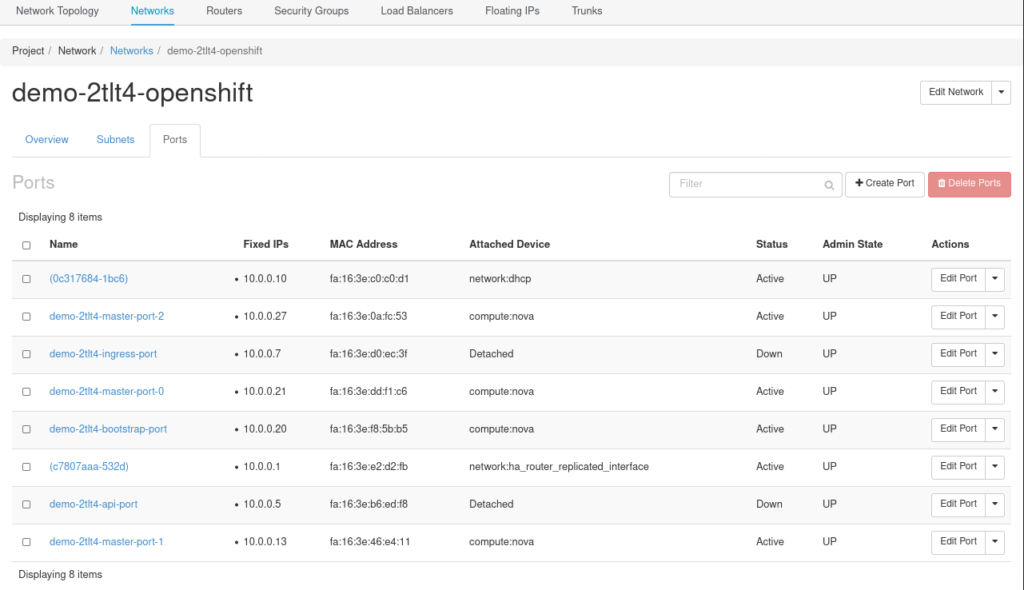

The IP address of 10.0.0.5 is attached to the following port:

    $ openstack port list | grep "0.0.5"
    | da4e74b5-7ab0-4961-a09f-8d3492c441d4 | demo-2tlt4-api-port | fa:16:3e:b6:ed:f8 | ip_address='10.0.0.5', subnet_id='50a5dc8e-bc79-421b-aa53-31ddcb5cf694' | DOWN |

That final “DOWN” is the port state. It is also showing as detached. It is on the internal network.

Looking at the installer code, the one place I can find a reference to the api_port is in the template data/data/openstack/topology/private-network.tf, where it is used to build a resource of type openstack_networking_port_v2. This resource type is used quite heavily in the rest of the installer’s Go code.

Looking in the Terraform data built by the installer, I can find references to both the api_port and openstack_networking_port_v2. Specifically, there are several objects of type openstack_networking_port_v2 with the names:

    $ cat moc/terraform.tfstate | jq -jr '.resources[] | select( .type == "openstack_networking_port_v2") | .name, ", ", .module, "\n" '
    api_port, module.topology
    bootstrap_port, module.bootstrap
    ingress_port, module.topology
    masters, module.topology

On a baremetal install, we need an explicit A record for api-int.<cluster_name>.<base_domain>. That requirement does not exist for OpenStack, however, and I did not have one the last time I installed.

api-int is the internal access to the API server. Since the controllers are hanging trying to talk to it, I assume that we are still at the stage where we are building the control plane, and that it should be pointing at the bootstrap server. However, since the port above is detached, traffic cannot get there. There are a few hypotheses in my head right now:

1. The port should be attached to the bootstrap device.

2. The port should be attached to a load balancer.

3. The port should be attached to something that is acting like a load balancer.

I’m leaning toward 3 right now.

The install-config.yaml has the line:

octaviaSupport: "1"

But I don’t think any Octavia resources are being used.

    $ openstack loadbalancer pool list
    $ openstack loadbalancer list
    $ openstack loadbalancer flavor list
    Not Found (HTTP 404) (Request-ID: req-fcf2709a-c792-42f7-b711-826e8bfa1b11)

Hi.

I was behind the networking for baremetal, which later got ported to OpenStack and oVirt. IIRC, in the OpenStack port the API port should not be attached; it should simply be in the allowed address pairs of the masters. This way, keepalived can move the address between the bootstrap and the masters as needed. The movement is:

– Bootstrap: This is where api-int starts and where it stays until at least one master API is up. From here the masters retrieve the ignition files served by the MachineConfigServer (MCS).

– Masters: This is where it finally settles, moving over as soon as it can. Workers will retrieve their ignition from the MCS running on the masters.
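One way to check the allowed address pairs mentioned above is to print them for each master port. This is only a rough sketch: it assumes the master ports have "master" somewhere in their names, which may not hold on your cluster, and the function name is made up for illustration.

```shell
# Print every port whose name contains "master", together with its
# allowed address pairs. The "master" name pattern is an assumption;
# adjust it to match the ports in your cluster.
show_master_address_pairs() {
    for port in $(openstack port list -f value -c Name | grep master); do
        openstack port show "$port" -c name -c allowed_address_pairs
    done
}
```

Running `show_master_address_pairs` from a shell with the OpenStack credentials loaded should show whether 10.0.0.5 appears in the allowed address pairs of each master port.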

I would recommend you log in to the bootstrap node and verify that the .5 address is configured. If it isn’t, check the keepalived logs with crictl. If it is, check if MCS is running.
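The checks above could be scripted roughly as follows, to be run on the bootstrap node. Treat it as a sketch under assumptions: the address 10.0.0.5 comes from the port listing in the post, while the container names "keepalived" and "machine-config-server" and the function names are guesses that may need adjusting.

```shell
# The api-int address from the port listing; override with VIP=... if needed.
VIP="${VIP:-10.0.0.5}"

# Succeeds if stdin, in `ip -o -4 addr show` format, contains this address.
# The trailing "/" keeps 10.0.0.5 from matching 10.0.0.50.
has_address() {
    grep -Fq "inet $1/"
}

check_bootstrap() {
    if ip -o -4 addr show | has_address "$VIP"; then
        echo "$VIP is configured; looking for the Machine Config Server"
        # Container name is an assumption.
        sudo crictl ps --name machine-config-server
    else
        echo "$VIP is not configured; showing recent keepalived logs"
        # -a includes exited containers, -q prints only container IDs.
        id="$(sudo crictl ps -a --name keepalived -q | head -n 1)"
        if [ -n "$id" ]; then
            sudo crictl logs --tail 50 "$id"
        else
            echo "no keepalived container found"
        fi
    fi
}
```

Calling `check_bootstrap` follows the same branching as the advice: address missing leads to the keepalived logs, address present leads to the MCS check.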