I have two distinct Kubernetes clusters I work with on a daily basis. One is a local Vagrant-based set of VMs built by the Kubevirt code base. The other is a “baremetal” install of OpenShift Origin on a pair of Fedora workstations in my office. I want to be able to switch back and forth between them.

When you run the kubectl command without telling it where to find its configuration file, it defaults to $HOME/.kube/config. This file holds configuration values for a handful of object types. Here is an abbreviated look at the one set up by Origin:

```yaml
apiVersion: v1
clusters:
- cluster:
    api-version: v1
    certificate-authority-data: LS0...LQo=
    server: https://munchlax:8443
  name: munchlax:8443
contexts:
- context:
    cluster: munchlax:8443
    namespace: default
    user: system:admin/munchlax:8443
  name: default/munchlax:8443/system:admin
- context:
    cluster: munchlax:8443
    namespace: kube-system
    user: system:admin/munchlax:8443
  name: kube-system/munchlax:8443/system:admin
current-context: kube-system/munchlax:8443/system:admin
kind: Config
preferences: {}
users:
- name: system:admin/munchlax:8443
  user:
    client-certificate-data: LS0...tLS0K
    client-key-data: LS0...LS0tCg==
```

Note that I have elided the very long cryptographic entries for certificate-authority-data, client-certificate-data, and client-key-data.

First up is an array of clusters. At minimum, each entry provides a server field, which is the remote URL to use, some certificate authority data, and a name that the rest of this file uses to refer to that cluster.

At the bottom of the file, we see a chunk of data for user identification. Again, the user has a local name, system:admin/munchlax:8443, with the rest of the identifying information hidden away inside the client certificate.

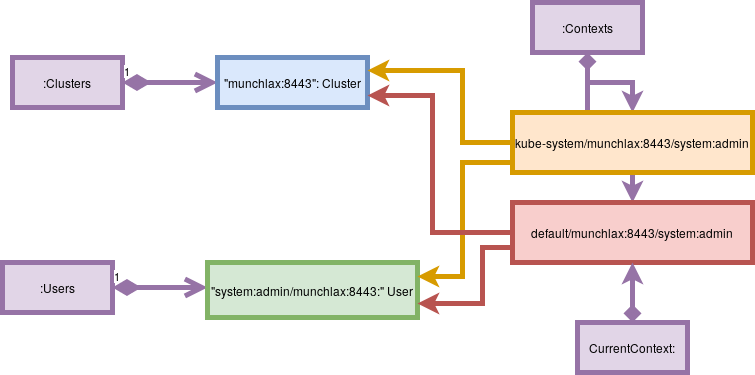

These two entities are pulled together in a Context entry. In addition, a context entry has a namespace field. Again, we have an array, with each entry containing a name field. The Name of the context object is going to be used in the current-context field and this is where kubectl starts its own configuration.  Here is an object diagram.

The next time I run kubectl, it will read this file.

- Based on the value of current-context, it will see it should use the kube-system/munchlax:8443/system:admin context.

- From that context, it will see it should use

  - the system:admin/munchlax:8443 user,

  - the kube-system namespace, and

  - the URL https://munchlax:8443 from the munchlax:8443 cluster.
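The same lookup chain can be written out as a minimal Python sketch. It assumes the file has already been parsed into plain dicts and lists (for example by a YAML library, not shown), and `find_named` and `resolve_current` are hypothetical helper names, not anything kubectl exposes.

```python
from typing import Any

# The Origin kubeconfig shown above as plain dicts/lists (long data elided).
ORIGIN_CONFIG: dict[str, Any] = {
    "apiVersion": "v1",
    "kind": "Config",
    "clusters": [
        {"name": "munchlax:8443",
         "cluster": {"server": "https://munchlax:8443",
                     "certificate-authority-data": "LS0...LQo="}},
    ],
    "contexts": [
        {"name": "default/munchlax:8443/system:admin",
         "context": {"cluster": "munchlax:8443", "namespace": "default",
                     "user": "system:admin/munchlax:8443"}},
        {"name": "kube-system/munchlax:8443/system:admin",
         "context": {"cluster": "munchlax:8443", "namespace": "kube-system",
                     "user": "system:admin/munchlax:8443"}},
    ],
    "current-context": "kube-system/munchlax:8443/system:admin",
    "users": [
        {"name": "system:admin/munchlax:8443",
         "user": {"client-certificate-data": "LS0...tLS0K",
                  "client-key-data": "LS0...LS0tCg=="}},
    ],
}


def find_named(entries: list[dict[str, Any]], name: str) -> dict[str, Any]:
    """Return the entry with this name from a clusters, contexts or users list."""
    for entry in entries:
        if entry["name"] == name:
            return entry
    raise KeyError(f"no entry named {name!r}")


def resolve_current(config: dict[str, Any]) -> dict[str, str]:
    """Follow current-context -> context -> cluster and user."""
    context = find_named(config["contexts"], config["current-context"])["context"]
    cluster = find_named(config["clusters"], context["cluster"])["cluster"]
    user = find_named(config["users"], context["user"])  # must exist too
    return {
        "user": user["name"],
        # A context without a namespace falls back to "default".
        "namespace": context.get("namespace", "default"),
        "server": cluster["server"],
    }


print(resolve_current(ORIGIN_CONFIG))
```

Matching by the name field, rather than by position in the arrays, is what lets a context refer to clusters and users defined anywhere in the file.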

Below is a similar file from the kubevirt setup, found on my machine at the path ~/go/src/kubevirt.io/kubevirt/cluster/vagrant/.kubeconfig:

```yaml
apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: LS0...LS0tLQo=
    server: https://192.168.200.2:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: kubernetes-admin
  name: kubernetes-admin@kubernetes
current-context: kubernetes-admin@kubernetes
kind: Config
preferences: {}
users:
- name: kubernetes-admin
  user:
    client-certificate-data: LS0...LS0tLQo=
    client-key-data: LS0...LS0tCg==
```

Again, I’ve elided the long cryptographic data. This file is organized the same way as the default one. The kubevirt tooling uses it through a shell script that resolves to the following command line:

```shell
${KUBEVIRT_PATH}cluster/vagrant/.kubectl --kubeconfig=${KUBEVIRT_PATH}cluster/vagrant/.kubeconfig "$@"
```
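All the wrapper really changes is which file kubectl reads. Here is a minimal sketch of that choice under a simplified model: an explicit --kubeconfig value wins, otherwise $HOME/.kube/config is used. The real kubectl also honors a KUBECONFIG environment variable, which this sketch ignores. The helper names are hypothetical, and the sketch builds a command for plain `kubectl` rather than the wrapper's bundled .kubectl binary.

```python
import os
from typing import Optional, Sequence


def kubeconfig_path(override: Optional[str] = None) -> str:
    """Simplified model: an explicit --kubeconfig value wins,
    else $HOME/.kube/config. (KUBECONFIG is deliberately ignored.)"""
    if override:
        return override
    return os.path.join(os.path.expanduser("~"), ".kube", "config")


def kubectl_argv(args: Sequence[str], kubeconfig: Optional[str] = None) -> list[str]:
    """Build a kubectl command line, adding --kubeconfig only for an
    override, in the same shape as the kubevirt wrapper script."""
    argv = ["kubectl"]
    if kubeconfig:
        argv.append(f"--kubeconfig={kubeconfig}")
    argv.extend(args)
    return argv


vagrant_config = os.path.expanduser(
    "~/go/src/kubevirt.io/kubevirt/cluster/vagrant/.kubeconfig")
print(kubectl_argv(["get", "pods"], vagrant_config))
print(kubeconfig_path())
```

The returned list could be handed to `subprocess.run` to actually invoke kubectl. The sketch only builds it.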

which overrides the default configuration location. What if I don’t want to use the shell script? I’ve manually merged the two files into a single ~/.kube/config. The resulting file has two users,

- system:admin/munchlax:8443

- kubernetes-admin

two clusters,

- munchlax:8443

- kubernetes

and three contexts:

- default/munchlax:8443/system:admin

- kube-system/munchlax:8443/system:admin

- kubernetes-admin@kubernetes
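Because contexts point at clusters and users purely by name, a hand merge only works if every referenced name survives it. Here is a small Python check for that, run over the merged file reduced to its names (servers and credentials left out). `dangling_references` is a hypothetical helper, not a kubectl feature.

```python
from typing import Any

# The hand-merged ~/.kube/config reduced to names and references.
MERGED: dict[str, Any] = {
    "clusters": [{"name": "munchlax:8443"}, {"name": "kubernetes"}],
    "users": [{"name": "system:admin/munchlax:8443"}, {"name": "kubernetes-admin"}],
    "contexts": [
        {"name": "default/munchlax:8443/system:admin",
         "context": {"cluster": "munchlax:8443", "user": "system:admin/munchlax:8443"}},
        {"name": "kube-system/munchlax:8443/system:admin",
         "context": {"cluster": "munchlax:8443", "user": "system:admin/munchlax:8443"}},
        {"name": "kubernetes-admin@kubernetes",
         "context": {"cluster": "kubernetes", "user": "kubernetes-admin"}},
    ],
    "current-context": "kubernetes-admin@kubernetes",
}


def dangling_references(config: dict[str, Any]) -> list[str]:
    """List every name that is referenced but never defined."""
    clusters = {c["name"] for c in config.get("clusters", [])}
    users = {u["name"] for u in config.get("users", [])}
    contexts = {c["name"] for c in config.get("contexts", [])}
    problems = []
    for entry in config.get("contexts", []):
        ctx = entry["context"]
        if ctx.get("cluster") not in clusters:
            problems.append(f"context {entry['name']}: unknown cluster {ctx.get('cluster')}")
        if ctx.get("user") not in users:
            problems.append(f"context {entry['name']}: unknown user {ctx.get('user')}")
    current = config.get("current-context")
    if current and current not in contexts:
        problems.append(f"current-context: unknown context {current}")
    return problems


# An empty list means every reference in the merged file resolves.
print(dangling_references(MERGED))
```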

With current-context set to kubernetes-admin@kubernetes:

```
$ kubectl get pods
NAME                                      READY     STATUS    RESTARTS   AGE
haproxy-686891680-k4fxp                   1/1       Running   0          15h
iscsi-demo-target-tgtd-2918391489-4wxv0   1/1       Running   0          15h
kubevirt-cockpit-demo-1842943600-3fcf9    1/1       Running   0          15h
libvirt-199kq                             2/2       Running   0          15h
libvirt-zj6vw                             2/2       Running   0          15h
spice-proxy-2868258710-l85g2              1/1       Running   0          15h
virt-api-3813486938-zpd8f                 1/1       Running   0          15h
virt-controller-1975339297-2z6lc          1/1       Running   0          15h
virt-handler-2s2kh                        1/1       Running   0          15h
virt-handler-9vvk1                        1/1       Running   0          15h
virt-manifest-322477288-g46l9             2/2       Running   0          15h
```

but with current-context set to kube-system/munchlax:8443/system:admin:

```
$ kubectl get pods
NAME                                         READY     STATUS             RESTARTS   AGE
tiller-deploy-3580499742-03pbx               1/1       Running            2          8d
youthful-wolverine-testme-4205106390-82gwk   0/1       CrashLoopBackOff   30         2h
```

The kubectl executable has built-in support for inspecting this configuration through its config subcommands:

```
[ayoung@ayoung541 helm-charts]$ kubectl config get-contexts
CURRENT   NAME                                     CLUSTER         AUTHINFO                     NAMESPACE
          kubernetes-admin@kubernetes              kubernetes      kubernetes-admin
          default/munchlax:8443/system:admin       munchlax:8443   system:admin/munchlax:8443   default
*         kube-system/munchlax:8443/system:admin   munchlax:8443   system:admin/munchlax:8443   kube-system
[ayoung@ayoung541 helm-charts]$ kubectl config current-context kubernetes-admin@kubernetes
kube-system/munchlax:8443/system:admin
[ayoung@ayoung541 helm-charts]$ kubectl config get-contexts
CURRENT   NAME                                     CLUSTER         AUTHINFO                     NAMESPACE
          default/munchlax:8443/system:admin       munchlax:8443   system:admin/munchlax:8443   default
*         kube-system/munchlax:8443/system:admin   munchlax:8443   system:admin/munchlax:8443   kube-system
          kubernetes-admin@kubernetes              kubernetes      kubernetes-admin
```
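`kubectl config current-context` only reports which context is active. The subcommand that switches is `kubectl config use-context NAME`, which rewrites the current-context field in the file, and that field is all that switching between the two clusters amounts to. Below is a rough Python model of listing and switching contexts on a parsed config. The function names are hypothetical and only two of the contexts are included.

```python
from typing import Any

CONFIG: dict[str, Any] = {
    "contexts": [
        {"name": "kubernetes-admin@kubernetes",
         "context": {"cluster": "kubernetes", "user": "kubernetes-admin"}},
        {"name": "kube-system/munchlax:8443/system:admin",
         "context": {"cluster": "munchlax:8443", "namespace": "kube-system",
                     "user": "system:admin/munchlax:8443"}},
    ],
    "current-context": "kube-system/munchlax:8443/system:admin",
}


def get_contexts(config: dict[str, Any]) -> list[tuple[str, str, str, str, str]]:
    """Rows shaped like `kubectl config get-contexts`:
    (CURRENT, NAME, CLUSTER, AUTHINFO, NAMESPACE)."""
    current = config.get("current-context")
    rows = []
    for entry in config.get("contexts", []):
        ctx = entry["context"]
        rows.append(("*" if entry["name"] == current else "",
                     entry["name"], ctx.get("cluster", ""),
                     ctx.get("user", ""), ctx.get("namespace", "")))
    return rows


def use_context(config: dict[str, Any], name: str) -> dict[str, Any]:
    """Return a copy with current-context switched to an existing context."""
    if name not in {entry["name"] for entry in config.get("contexts", [])}:
        raise ValueError(f"no context named {name!r}")
    return {**config, "current-context": name}


switched = use_context(CONFIG, "kubernetes-admin@kubernetes")
for row in get_contexts(switched):
    print(row)
```

Returning a new dict instead of editing in place keeps the sketch free of side effects; kubectl itself writes the change back to the kubeconfig file.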

The OpenShift login command, oc login, can add more configuration information:

```
$ oc login
Authentication required for https://munchlax:8443 (openshift)
Username: ayoung
Password:
Login successful.
You have one project on this server: "default"
Using project "default".
```

This added the following information to my .kube/config.

Under contexts:

```yaml
- context:
    cluster: munchlax:8443
    namespace: default
    user: ayoung/munchlax:8443
  name: default/munchlax:8443/ayoung
```

Under users:

```yaml
- name: ayoung/munchlax:8443
  user:
    token: 24i...o8_8
```

This time I elided the token.
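The new user entry authenticates differently from system:admin: it carries a token rather than a client certificate and key. Here is a small sketch that tells the two kinds apart by checking the `token`, `client-certificate-data` and `client-certificate` keys. Anything else, such as other auth mechanisms, lands in a catch-all bucket. `auth_method` is a hypothetical helper.

```python
from typing import Any

# The two user entries now in ~/.kube/config (secrets elided as above).
USERS: list[dict[str, Any]] = [
    {"name": "system:admin/munchlax:8443",
     "user": {"client-certificate-data": "LS0...tLS0K",
              "client-key-data": "LS0...LS0tCg=="}},
    {"name": "ayoung/munchlax:8443",
     "user": {"token": "24i...o8_8"}},
]


def auth_method(user_entry: dict[str, Any]) -> str:
    """Classify how a kubeconfig user entry authenticates."""
    user = user_entry.get("user", {})
    if "token" in user:
        return "bearer token"
    if "client-certificate-data" in user or "client-certificate" in user:
        return "client certificate"
    return "other"


for entry in USERS:
    print(entry["name"], "->", auth_method(entry))
```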

It seems like it would be fairly easy to write a tool for merging two configuration files. The caveats I can see include:

- don’t duplicate entries

- ensure that two entries with the same name but different values trigger an error
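Those two rules translate fairly directly into code. Here is a minimal sketch, assuming both files are already parsed into dicts (reading and writing YAML is left out) and using hypothetical names (`merge_kubeconfigs`, `MergeConflict`). Entries are matched by name within clusters, contexts and users: an identical entry is skipped, and a same-name entry with different contents raises an error. The caveats above say nothing about current-context, so this sketch keeps the first file's value as a policy choice.

```python
import copy
from typing import Any

NAMED_SECTIONS = ("clusters", "contexts", "users")


class MergeConflict(ValueError):
    """Two files define the same name with different contents."""


def merge_kubeconfigs(base: dict[str, Any], other: dict[str, Any]) -> dict[str, Any]:
    """Merge other into a copy of base. Top-level scalar keys come from base."""
    merged = copy.deepcopy(base)
    for section in NAMED_SECTIONS:
        entries = merged.setdefault(section, [])
        by_name = {entry["name"]: entry for entry in entries}
        for entry in other.get(section, []):
            existing = by_name.get(entry["name"])
            if existing is None:
                added = copy.deepcopy(entry)
                entries.append(added)
                by_name[entry["name"]] = added
            elif existing != entry:
                # Same name, different values: refuse rather than guess.
                raise MergeConflict(f"{section}: {entry['name']!r} differs between files")
            # Otherwise it is an exact duplicate and is skipped.
    # Policy choice: keep base's current-context; adopt other's only if base has none.
    if not merged.get("current-context") and other.get("current-context"):
        merged["current-context"] = other["current-context"]
    return merged


origin = {
    "clusters": [{"name": "munchlax:8443", "cluster": {"server": "https://munchlax:8443"}}],
    "users": [{"name": "system:admin/munchlax:8443", "user": {}}],
    "contexts": [{"name": "kube-system/munchlax:8443/system:admin",
                  "context": {"cluster": "munchlax:8443", "namespace": "kube-system",
                              "user": "system:admin/munchlax:8443"}}],
    "current-context": "kube-system/munchlax:8443/system:admin",
}
kubevirt = {
    "clusters": [{"name": "kubernetes", "cluster": {"server": "https://192.168.200.2:6443"}}],
    "users": [{"name": "kubernetes-admin", "user": {}}],
    "contexts": [{"name": "kubernetes-admin@kubernetes",
                  "context": {"cluster": "kubernetes", "user": "kubernetes-admin"}}],
    "current-context": "kubernetes-admin@kubernetes",
}

merged = merge_kubeconfigs(origin, kubevirt)
print([c["name"] for c in merged["contexts"]])
```

Because identical entries are skipped, merging the same file in twice is harmless. For comparison, kubectl can print a merged view of several files listed in the KUBECONFIG environment variable with `kubectl config view --flatten`, but there the first file to define a name wins rather than raising an error.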