The process of deploying the overcloud goes through several technologies. Here’s what I’ve learned about tracing it.

I am not a Heat or TripleO developer. I’ve just started working with TripleO, and I’m trying to understand this based on what I can gather from the documentation out there and from the little bit of experience I’ve had working with TripleO. Anything I say here might be wrong. If someone who knows better can point out my errors, please do so.

[UPDATE]: Steve Hardy has corrected many points, and his comments have been noted inline.

To kick the whole thing off in the simplest case, you would run the command `openstack overcloud deploy`.

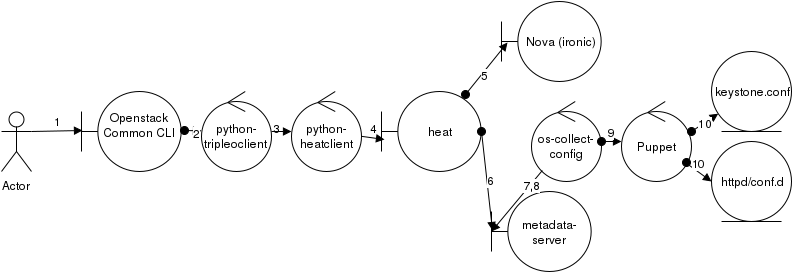

Roughly speaking, here is the sequence (as best I can tell):

- The user types `openstack overcloud deploy` on the command line.

- This invokes the common OpenStack CLI, which parses the command and matches the TripleO client with the `overcloud deploy` subcommand.

- The TripleO client is a thin wrapper around the Heat client and calls the equivalent of `heat stack-create overcloud`.

- python-heatclient (after obtaining a Keystone token) calls the Heat API server with the URL and data needed to create the stack.

- Heat makes the appropriate calls to Nova (running the Ironic driver) to activate a baremetal node and deploy the appropriate instance on it.

- Before the node is up and running, Heat has posted Hiera data to the metadata server.

- The newly provisioned machine will run cloud-init, which in turn runs os-collect-config.

[UPDATE] Steve Hardy’s response: This isn’t strictly accurate: cloud-init is used to deliver some data that os-collect-config consumes (via the heat-local collector), but cloud-init isn’t involved with actually running os-collect-config (it’s just configured to start in the image).

- os-collect-config will start polling for changes to the metadata.

- [UPDATE] os-collect-config will start calling ~~Puppet apply based on the Hiera data~~ os-refresh-config only, which then invokes a script that runs Puppet.

Steve’s note: os-collect-config never runs Puppet; it runs only os-refresh-config, which then invokes a script that runs Puppet.

- The Keystone Puppet module will set values in the Keystone config file and the httpd/conf.d files, and perform other configuration work.
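To make the split between os-collect-config and os-refresh-config concrete, here is a rough Python model of the poll-and-refresh cycle from the list above. It is not the real os-collect-config code: `fetch_metadata`, `run_refresh`, `poll_once` and `poll_loop` are hypothetical names, and the sketch assumes only that the collector compares freshly fetched metadata with the copy it saw last time and hands off to os-refresh-config when they differ.

```python
import json
from typing import Callable, Optional


def poll_once(
    fetch_metadata: Callable[[], dict],
    run_refresh: Callable[[dict], None],
    last_seen: Optional[str],
) -> str:
    """One polling cycle (hypothetical model, not the real os-collect-config).

    Fetch the metadata, serialize it canonically, and call run_refresh only
    when it differs from the previous cycle. Returns the canonical form so
    the caller can pass it into the next cycle.
    """
    current = json.dumps(fetch_metadata(), sort_keys=True)
    if current != last_seen:
        # In TripleO this hand-off goes to os-refresh-config, whose scripts
        # in turn run Puppet; here it is just a callback.
        run_refresh(json.loads(current))
    return current


def poll_loop(fetch_metadata, run_refresh, cycles: int) -> None:
    """Run a fixed number of cycles (the real agent keeps looping, sleeping between polls)."""
    last_seen = None
    for _ in range(cycles):
        last_seen = poll_once(fetch_metadata, run_refresh, last_seen)


if __name__ == "__main__":
    # Simulated metadata server: the deployment data changes on the third poll.
    responses = iter([
        {"deployments": []},
        {"deployments": []},
        {"deployments": [{"name": "ControllerDeployment"}]},
    ])
    poll_loop(lambda: next(responses), lambda md: print("refresh:", md), cycles=3)
```

In the simulated run, the refresh callback fires on the first and third polls only, which is the behaviour that keeps Puppet from being re-run when nothing in the metadata has changed.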

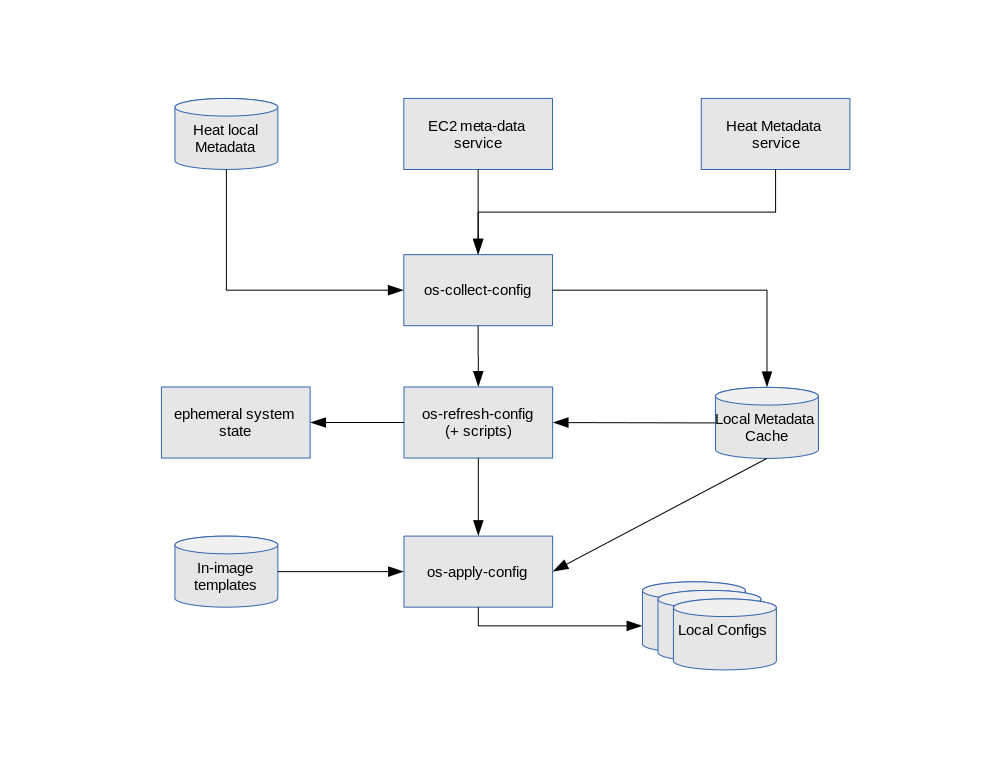

Here is a diagram of how os-collect-config is designed.

When a controller image is built for TripleO, some portion of the Hiera data is stored in /etc/puppet/. There is a file, /etc/puppet/hiera.yaml (which looks a lot like /etc/hiera.yaml, an RPM-controlled file), and there are subfiles in /etc/puppet/hieradata such as:

/etc/puppet/hieradata/heat_config_ControllerOvercloudServicesDeployment_Step4.json
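hiera.yaml lists a hierarchy of data sources, and a plain Hiera lookup returns the value from the first source in that hierarchy that defines the key. The following is a simplified Python model of that first-match lookup, just to show why the per-deployment files under /etc/puppet/hieradata can override more generic data. The level names and keys are hypothetical, and real Hiera also does interpolation and merge lookups that this sketch ignores.

```python
from typing import Any, Dict, List, Optional

Hieradata = Dict[str, Any]


def hiera_lookup(key: str, hierarchy: List[str], sources: Dict[str, Hieradata],
                 default: Optional[Any] = None) -> Any:
    """Return the value for key from the first hierarchy level that defines it.

    hierarchy: level names in priority order (most specific first), as they
        would be listed in hiera.yaml.
    sources: maps a level name to the parsed contents of its data file
        (for example a JSON file under /etc/puppet/hieradata). Levels with
        no data file are skipped.
    """
    for level in hierarchy:
        data = sources.get(level)
        if data is not None and key in data:
            return data[key]
    return default


if __name__ == "__main__":
    # Hypothetical hierarchy and data, for illustration only.
    hierarchy = ["heat_config_ControllerOvercloudServicesDeployment_Step4",
                 "controller", "common"]
    sources = {
        "heat_config_ControllerOvercloudServicesDeployment_Step4": {"example::debug": True},
        "common": {"example::debug": False, "example::servers": ["a", "b"]},
    }
    print(hiera_lookup("example::debug", hierarchy, sources))
    print(hiera_lookup("example::servers", hierarchy, sources))
```

Here `example::debug` resolves to True because the more specific level wins, while `example::servers` falls through the missing "controller" level to "common".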

UPDATE: Response from Steve Hardy about when the Hiera data is posted:

This is kind of correct: we wait for the server to become ACTIVE, which means the OS::Nova::Server resource is declared CREATE_COMPLETE. Then we do some network configuration, and *then* we post the hieradata via a Heat software deployment.

So, we post the hieradata to the Heat metadata API only after the node is up and running and has its network configured (not before).

https://github.com/openstack/tripleo-heat-templates/blob/master/puppet/controller.yaml#L610

Note the depends_on: we use that to control the ordering of configuration performed via Heat.
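Heat works out the order in which to create resources from their dependencies, including explicit depends_on entries. Here is a small Python model of that idea using the standard-library graphlib module (Python 3.9+). The dependency graph is a hypothetical simplification built from resource names that show up in this post; it is not copied from controller.yaml, so check the template linked above for the real edges.

```python
from graphlib import TopologicalSorter

# Hypothetical, simplified dependency graph: each resource maps to the set of
# resources it depends on (explicit depends_on or implicit references).
DEPENDS_ON = {
    "Controller": set(),                                # the OS::Nova::Server
    "NetworkDeployment": {"Controller"},                # network configuration
    "ControllerDeployment": {"NetworkDeployment"},      # hieradata, posted after networking
    "ControllerAllNodesDeployment": {"ControllerDeployment"},
}


def creation_order(graph):
    """Return one valid creation order: every resource comes after its dependencies."""
    return list(TopologicalSorter(graph).static_order())


if __name__ == "__main__":
    print(creation_order(DEPENDS_ON))
```

Because this toy graph is a single chain, there is only one valid order. Heat can create independent resources in parallel; graphlib's `prepare()`/`get_ready()`/`done()` interface is a closer model of that than `static_order()`.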

However, the dynamic data seems to be stored in /var/lib/os-collect-config/:

```
$ ls -la /var/lib/os-collect-config/*json
-rw-------. 1 root root 2929 Jun 16 02:55 /var/lib/os-collect-config/ControllerAllNodesDeployment.json
-rw-------. 1 root root 187 Jun 16 02:55 /var/lib/os-collect-config/ControllerBootstrapNodeDeployment.json
-rw-------. 1 root root 1608 Jun 16 02:55 /var/lib/os-collect-config/ControllerCephDeployment.json
-rw-------. 1 root root 435 Jun 16 02:55 /var/lib/os-collect-config/ControllerClusterDeployment.json
-rw-------. 1 root root 36481 Jun 16 02:55 /var/lib/os-collect-config/ControllerDeployment.json
-rw-------. 1 root root 242 Jun 16 02:55 /var/lib/os-collect-config/ControllerSwiftDeployment.json
-rw-------. 1 root root 1071 Jun 16 02:55 /var/lib/os-collect-config/ec2.json
-rw-------. 1 root root 388 Jun 15 18:38 /var/lib/os-collect-config/heat_local.json
-rw-------. 1 root root 1325 Jun 16 02:55 /var/lib/os-collect-config/NetworkDeployment.json
-rw-------. 1 root root 557 Jun 15 19:56 /var/lib/os-collect-config/os_config_files.json
-rw-------. 1 root root 263313 Jun 16 02:55 /var/lib/os-collect-config/request.json
-rw-------. 1 root root 1187 Jun 16 02:55 /var/lib/os-collect-config/VipDeployment.json
```

For each of these files there are two older copies that end in .last and .orig as well.
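Since those older copies are kept, one way to see what a redeploy actually changed on a node is to compare a file with its .last copy. This Python sketch reports which top-level keys were added, removed or changed. It assumes both files are JSON objects and that the copy is named like ControllerDeployment.json.last (adjust the suffix if your node names them differently); the function names are made up for this example.

```python
import json
import sys
from pathlib import Path


def diff_top_level(current: dict, previous: dict) -> dict:
    """Classify top-level keys as added, removed or changed between two dicts."""
    return {
        "added": sorted(current.keys() - previous.keys()),
        "removed": sorted(previous.keys() - current.keys()),
        "changed": sorted(k for k in current.keys() & previous.keys()
                          if current[k] != previous[k]),
    }


def diff_with_last(path: Path) -> dict:
    """Compare e.g. ControllerDeployment.json with ControllerDeployment.json.last (assumed name)."""
    last = path.with_name(path.name + ".last")
    current = json.loads(path.read_text())
    previous = json.loads(last.read_text())
    return diff_top_level(current, previous)


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (as root, since the files are mode 0600):
    #   sudo python3 diff_last.py /var/lib/os-collect-config/ControllerDeployment.json
    print(json.dumps(diff_with_last(Path(sys.argv[1])), indent=2))
```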

In my previous post, I wrote about setting Keystone configuration options such as `'identity/domain_specific_drivers_enabled': value => 'True';`. I can see this value set in /var/lib/os-collect-config/request.json, inside a large block keyed “config”.
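request.json is big, so instead of paging through it, a short script can report every place a setting appears. This Python sketch walks the parsed JSON and yields the path to any key, or any string value, that contains a search term. It assumes nothing about the layout of request.json beyond it being valid JSON; string values are searched too in case the setting is embedded in a larger text blob rather than stored as its own key. `find_term` is a hypothetical helper name.

```python
import json
import sys
from typing import Any, Iterator, Tuple


def find_term(node: Any, term: str, path: str = "$") -> Iterator[Tuple[str, str]]:
    """Yield (json_path, kind) for every key or string value containing term."""
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{path}[{key!r}]"
            if term in str(key):
                yield child, "key"
            yield from find_term(value, term, child)
    elif isinstance(node, list):
        for i, item in enumerate(node):
            yield from find_term(item, term, f"{path}[{i}]")
    elif isinstance(node, str) and term in node:
        yield path, "value"


if __name__ == "__main__" and len(sys.argv) > 2:
    # Usage: sudo python3 find_term.py /var/lib/os-collect-config/request.json \
    #            domain_specific_drivers_enabled
    with open(sys.argv[1]) as f:
        data = json.load(f)
    for json_path, kind in find_term(data, sys.argv[2]):
        print(kind, json_path)
```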

When I ran `openstack overcloud deploy`, one way I was able to track what was happening on the node was to tail the journal like this:

```
sudo journalctl -f | grep collect-config
```

Looking through the journal output, I can see the line that triggered the change:

```
... /Stage[main]/Main/Keystone_config[identity/domain_specific_drivers_enabled]/ensure: ...
```
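When the journal is noisy, the Puppet resource lines can be pulled out with a small filter. This Python sketch prints the Keystone option and the resource property from every `Keystone_config[...]` line it reads. The regular expression is an assumption based on the single log line above, not on a documented log format, and `keystone_changes` is a hypothetical name.

```python
import fileinput
import re
import sys
from typing import Iterable, Iterator, Tuple

# Matches e.g. ".../Keystone_config[identity/domain_specific_drivers_enabled]/ensure:"
KEYSTONE_CONFIG_RE = re.compile(r"Keystone_config\[([^\]]+)\]/(\w+):")


def keystone_changes(lines: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield (option, property) for each Keystone_config resource event."""
    for line in lines:
        match = KEYSTONE_CONFIG_RE.search(line)
        if match:
            yield match.group(1), match.group(2)


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: sudo journalctl -f | python3 keystone_changes.py -
    # ("-" reads standard input; a saved log file path also works.)
    for option, prop in keystone_changes(fileinput.input(files=sys.argv[1:])):
        print(f"{option}: {prop}", flush=True)
```

Reading straight from `journalctl -f` avoids the extra `grep` stage; if you keep it, GNU grep's `--line-buffered` flag stops it from holding lines back when its output goes to a pipe.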

Hi,

Thanks for such a nice write-up. It’s really helpful.

I’m trying to deploy RHOSP12 on 9 servers (3 controllers, 2 compute nodes with OVS-DPDK, 2 compute nodes with SR-IOV, and 2 Ceph storage nodes). The overcloud nodes are provisioned.

But the overcloud stack failed. When I analyzed the nested stacks, the only reason shown was that the deployment was cancelled. Also, /var/log/messages on the provisioned overcloud nodes shows the messages below related to os-collect-config:

```
Jun 1 23:53:18 controller-2 NetworkManager[1145]: [1527911598.4166] device (p2p2): state change: ip-config -> failed (reason 'ip-config-unavailable', sys-iface-state: 'managed')
Jun 1 23:53:18 controller-2 NetworkManager[1145]: [1527911598.4168] device (p2p2): Activation: failed for connection 'System p2p2'
Jun 1 23:53:18 controller-2 NetworkManager[1145]: [1527911598.4175] device (em1): state change: failed -> disconnected (reason 'none', sys-iface-state: 'managed')
Jun 1 23:53:18 controller-2 NetworkManager[1145]: [1527911598.4200] device (em2): state change: failed -> disconnected (reason 'none', sys-iface-state: 'managed')
Jun 1 23:53:18 controller-2 NetworkManager[1145]: [1527911598.4222] device (p2p1): state change: failed -> disconnected (reason 'none', sys-iface-state: 'managed')
Jun 1 23:53:18 controller-2 NetworkManager[1145]: [1527911598.4247] device (p2p2): state change: failed -> disconnected (reason 'none', sys-iface-state: 'managed')
Jun 1 23:53:19 controller-2 ntpd[17497]: Deleting interface #422 em1, fe80::266e:96ff:fe5f:dcd0#123, interface stats: received=0, sent=0, dropped=0, active_time=43 secs
Jun 1 23:53:19 controller-2 ntpd[17497]: Deleting interface #421 em2, fe80::266e:96ff:fe5f:dcd2#123, interface stats: received=0, sent=0, dropped=0, active_time=43 secs
Jun 1 23:53:19 controller-2 ntpd[17497]: Deleting interface #420 p2p1, fe80::a236:9fff:feef:f72c#123, interface stats: received=0, sent=0, dropped=0, active_time=43 secs
Jun 1 23:53:19 controller-2 ntpd[17497]: Deleting interface #419 p2p2, fe80::a236:9fff:feef:f72e#123, interface stats: received=0, sent=0, dropped=0, active_time=43 secs
Jun 1 23:53:29 controller-2 os-collect-config: /var/lib/os-collect-config/local-data not found. Skipping
Jun 1 23:53:29 controller-2 os-collect-config: No local metadata found (['/var/lib/os-collect-config/local-data'])
Jun 1 23:53:59 controller-2 os-collect-config: /var/lib/os-collect-config/local-data not found. Skipping
Jun 1 23:53:59 controller-2 os-collect-config: No local metadata found (['/var/lib/os-collect-config/local-data'])
Jun 1 23:54:29 controller-2 os-collect-config: /var/lib/os-collect-config/local-data not found. Skipping
Jun 1 23:54:29 controller-2 os-collect-config: No local metadata found (['/var/lib/os-collect-config/local-data'])
Jun 1 23:54:59 controller-2 os-collect-config: /var/lib/os-collect-config/local-data not found. Skipping
Jun 1 23:54:59 controller-2 os-collect-config: No local metadata found (['/var/lib/os-collect-config/local-data'])
```

Any help or input on this? Please suggest.

I’m not familiar with that error. I’d ask in #tripleo on Freenode IRC.