The policy management tool I’m working on really needs revision and change management. Since I’ve spent so much time with Git, it shapes how I think about change management. So, here is my attempt to lay out my current thinking on implementing a Git-like scheme for managing policy rules.

A policy line is composed of two chunks of data: a key and a value. The keys are of the form

identity:create_user.

Additionally, the keys are scoped to a specific service (Keystone, Nova, etc).

The value is the check string. These are of the form

role:admin and project_id:target.project_id
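To make the key/value structure concrete, here is a minimal sketch that turns a policy file into one tuple per policy line, scoped to a service. The file excerpt, the `policy_lines` helper, and the lowercase service name are assumptions for illustration, not part of the tool.

```python
import json

# Hypothetical excerpt of a Keystone policy.json file (illustrative only).
policy_text = """
{
    "identity:create_user": "is_admin:True",
    "identity:delete_user": "is_admin:True"
}
"""


def policy_lines(service, text):
    """Return (service, key, check_string) tuples, one per policy line."""
    return [(service, key, value) for key, value in json.loads(text).items()]


lines = policy_lines("keystone", policy_text)
for service, key, check_string in lines:
    print(service, key, check_string)
```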

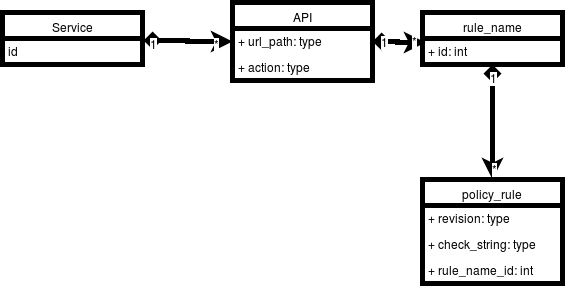

It is the check string that is most important to revision control. This lends itself to an entity diagram like this:

Whether each of these gets its own table remains to be seen. The interesting part is the rule_name to policy_rule mapping.

Let’s state that the policy_rule table entries are immutable. If we want to change policy, we add a new entry and leave the old ones in place. The new entry will have a new revision value. For now, let’s assume revisions are integers and are monotonically increasing. So, when I first upload the Keystone policy.json file, each entry gets a revision ID of 1. In this example, all check_strings start off as “is_admin:True”.

Now let’s assume I modify the identity:create_user rule. I’m going to arbitrarily say that the id for this record is 68. I want to change it to:

role:admin and domain_id:target.domain_id

This lets us do some scope checking. The new entry goes into the policy_rule table like so:

| rule_name_id | check_string | revision |
|---|---|---|
| 68 | is_admin:True | 1 |
| 68 | role:admin and domain_id:target.domain_id | 2 |
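The append-only behaviour can be sketched with an in-memory SQLite database. The column types, the composite primary key, and the `add_rule` helper are assumptions made for this example. The post only fixes the three column names.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Assumed schema: one row per (rule, revision); rows are never updated.
conn.execute(
    "CREATE TABLE policy_rule ("
    " rule_name_id INTEGER NOT NULL,"
    " check_string TEXT NOT NULL,"
    " revision INTEGER NOT NULL,"
    " PRIMARY KEY (rule_name_id, revision))"
)


def add_rule(conn, rule_name_id, check_string, revision):
    """Append a new row; existing rows are left untouched."""
    conn.execute(
        "INSERT INTO policy_rule (rule_name_id, check_string, revision)"
        " VALUES (?, ?, ?)",
        (rule_name_id, check_string, revision),
    )


add_rule(conn, 68, "is_admin:True", 1)
add_rule(conn, 68, "role:admin and domain_id:target.domain_id", 2)

rows = conn.execute(
    "SELECT rule_name_id, check_string, revision FROM policy_rule"
    " ORDER BY revision"
).fetchall()
for row in rows:
    print(row)
```

Both versions of rule 68 remain in the table, which is what makes older policy states recoverable.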

From a storage perspective this is quite nice, but from a “what does my final policy look like” perspective it is a mess.

In order to build the new view, we need SQL along the lines of

select * from policy_rule where revision = ?

Let’s call this line_query and assume that when we call it, the parameter is substituted for the question mark. We would then need code like this pseudo-code:

    doc = dict()
    # Apply revisions oldest first so later entries overwrite earlier ones.
    for revision in range(1, max_revision + 1):
        for result in line_query.execute(revision):
            index = result['rule_name_id']
            doc[index] = result['check_string']

This would build a dictionary layer by layer through all the revisions.
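A runnable sketch of that layering, using SQLite in place of whatever database the tool ends up with. The `build_policy` function name and the extra rule with id 67 are hypothetical and only there to show that untouched rules carry forward.

```python
import sqlite3


def build_policy(conn, max_revision):
    """Layer revisions 1..max_revision; later revisions overwrite earlier ones."""
    doc = {}
    for revision in range(1, max_revision + 1):
        cursor = conn.execute(
            "SELECT rule_name_id, check_string FROM policy_rule"
            " WHERE revision = ?",
            (revision,),
        )
        for rule_name_id, check_string in cursor:
            doc[rule_name_id] = check_string
    return doc


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE policy_rule"
    " (rule_name_id INTEGER, check_string TEXT, revision INTEGER)"
)
conn.executemany(
    "INSERT INTO policy_rule VALUES (?, ?, ?)",
    [
        (67, "is_admin:True", 1),  # hypothetical second rule
        (68, "is_admin:True", 1),
        (68, "role:admin and domain_id:target.domain_id", 2),
    ],
)

policy_at_1 = build_policy(conn, 1)
policy_at_2 = build_policy(conn, 2)
print(policy_at_2)
```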

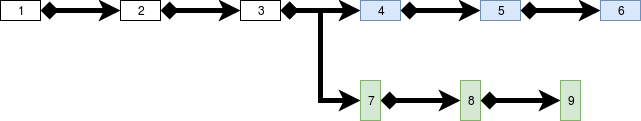

So far so good, but what happens if we decided to revert, and then to go a different direction? Right now, we have a revision chain like this:

And if we keep going, we have revisions 1 through 6 in a single chain.

But what happens if 4 was a mistake? We need to revert to 3 and create a new branch.

We have two choices. First, we could be destructive and delete all of the lines in revisions 4, 5, and 6. This means we can never recreate the state of 6 again.

What if we don’t know that 4 is a mistake? What if we just want to try another route, but come back to 4, 5, and 6 in the future?

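We want this: the chain from 1 through 6 stays intact, and a new branch of revisions 7, 8, and 9 starts from revision 3.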

But how will we know to take the branch when we create the new doc?

It’s a database! We put it in another table.

| revision_id | revision_parent_id |
|---|---|
| 2 | 1 |
| 3 | 2 |
| 4 | 3 |
| 5 | 4 |
| 6 | 5 |
| 7 | 3 |
| 8 | 7 |
| 9 | 8 |

In order to recreate revision 9, we use a stack. Push 9 on the stack, then find the row with revision_id 9 in the table, push its revision_parent_id on the stack, and continue until we reach a revision that has no parent row. Then, pop each revision_id off the stack and execute the same kind of pseudo-code I posted above.
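The stack walk can be sketched in plain Python, with the parent table held as a dictionary. The function names and the per-revision rule data are hypothetical and chosen only to show that the two branches produce different documents.

```python
def revision_chain(parents, revision_id):
    """Return the revisions from the root to revision_id, oldest first."""
    stack = [revision_id]
    # Follow parent links until we reach a revision with no parent row.
    while stack[-1] in parents:
        stack.append(parents[stack[-1]])
    chain = []
    while stack:
        chain.append(stack.pop())
    return chain


def build_policy(rules_by_revision, chain):
    """Layer the rules of each revision in chain order."""
    doc = {}
    for revision in chain:
        doc.update(rules_by_revision.get(revision, {}))
    return doc


# revision_id -> revision_parent_id, as in the table above.
parents = {2: 1, 3: 2, 4: 3, 5: 4, 6: 5, 7: 3, 8: 7, 9: 8}

# Hypothetical rule changes: revision -> {rule_name_id: check_string}.
rules_by_revision = {
    1: {68: "is_admin:True"},
    4: {68: "role:admin"},
    7: {68: "role:admin and domain_id:target.domain_id"},
}

chain_to_9 = revision_chain(parents, 9)
chain_to_6 = revision_chain(parents, 6)
policy_at_9 = build_policy(rules_by_revision, chain_to_9)
policy_at_6 = build_policy(rules_by_revision, chain_to_6)
print(chain_to_9, policy_at_9)
```

Because the chain for revision 9 skips 4, 5, and 6, the change made in revision 4 never reaches that document, while revision 6 can still be rebuilt from its own chain.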

It is a lot. It is kind of complicated, but it is the type of complicated that Python does well. However, databases do not do this kind of iterative querying well. It would take a stored procedure to perform this via a single database query.

Talking through this has encouraged me to take another look at using Git as the backing store instead of a relational database.